Operating Systems

Introduction

Before diving into the intricate world of operating systems, it’s essential to first understand the fundamental building blocks that form their core. In this article, we will explore, step by step, the vital components that make operating systems function seamlessly. As we move forward, we’ll uncover how these components work together to provide an efficient and stable environment. Join us on this journey to not only understand the individual parts but also to appreciate the interconnectedness that allows these intricate systems to function smoothly.

What is a Process?

A process in an operating system can be thought of as a program in execution, serving as the fundamental unit of work within the system. It is, therefore, crucial for enabling the computer to multitask efficiently. To elaborate, each process is allocated its own memory space, system resources, and execution context. These components ensure that each process operates independently, without interfering with others. As a result, processes empower an operating system to perform multiple tasks simultaneously, making it a key factor in the overall functionality and responsiveness of the system.

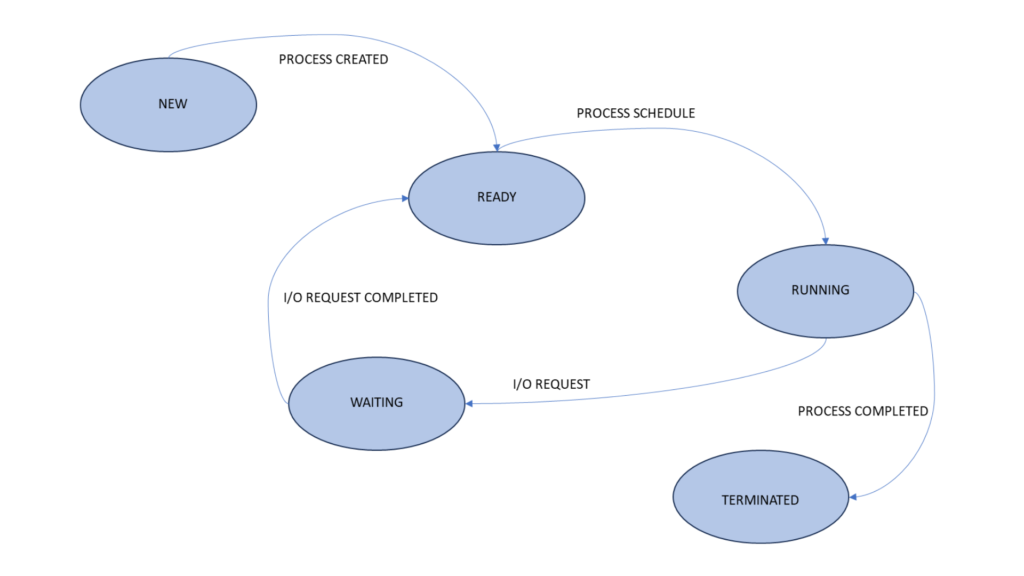

Process States

Processes can exist in several states, each signifying a different phase in their execution. These states are:

- New

- Ready

- Running

- Waiting

- Terminated

Understanding these states is crucial for effective process management.
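The five states can be sketched as a small state machine. The article does not list the transitions, so the ones below are an assumption based on the common textbook five-state model, and the names `State`, `TRANSITIONS`, and `can_move` are illustrative only.

```python
from enum import Enum


class State(Enum):
    NEW = "new"
    READY = "ready"
    RUNNING = "running"
    WAITING = "waiting"
    TERMINATED = "terminated"


# Assumed transitions of the classic five-state model (not taken from the article).
TRANSITIONS = {
    State.NEW: {State.READY},                                       # admitted by the OS
    State.READY: {State.RUNNING},                                   # dispatched to the CPU
    State.RUNNING: {State.READY, State.WAITING, State.TERMINATED},  # preempted, blocked, or exits
    State.WAITING: {State.READY},                                   # awaited event has completed
    State.TERMINATED: set(),                                        # no way back
}


def can_move(src, dst):
    """Return True if a process may go directly from state src to state dst."""
    return dst in TRANSITIONS[src]
```

For example, `can_move(State.WAITING, State.RUNNING)` is false in this model: a process that finishes waiting goes back to Ready and must be scheduled again.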

Process Control Block (PCB)

A PCB is a data structure that contains detailed information about an individual process in an operating system. It serves as a critical link between the operating system and the running processes, enabling the OS to maintain, monitor, and manipulate processes effectively.

Elements of a PCB

A typical PCB contains a wealth of information about a process. Here are some of the key elements that you’ll find in a Process Control Block:

| PCB field |
| --- |
| Process ID |
| Process State |
| Program Counter (PC) |
| CPU Registers |
| Priority |
| Memory Management Information |

1. Process ID (PID)

Each process in an operating system is assigned a unique identifier known as the Process ID (PID), which the operating system uses to distinguish one process from another. On mainstream systems the PID is an integer that is unique among the processes running at a given moment; the same value may be reused after a process terminates.

2. Process State

A process can be new, ready, running, waiting, or terminated. The PCB records the current state, which the operating system consults for process scheduling and resource allocation.

3. Program Counter (PC)

The Program Counter (PC) is a CPU register that holds the memory address of the next instruction to be executed. When a process is switched out, the operating system saves the PC value in the PCB; when the process is scheduled again, the saved value is loaded back so that execution resumes exactly where it left off.

4. CPU Registers

A PCB typically contains a snapshot of the CPU registers of a process. These registers include general-purpose and special-purpose registers. Saving and restoring the register values from the PCB is essential for maintaining the process’s state during context switches.

5. Priority

Processes often have different priorities. The PCB stores information about a process’s priority level, which is used by the operating system’s scheduler to determine the order in which processes are executed.

6. Memory Management Information

Information related to memory allocation and usage is also stored in the PCB. This data is vital for ensuring that a process has access to the required memory resources and preventing conflicts.

The Role of the PCB in Process Management

The PCB plays a central role in process management within an operating system. When a process is created or initiated, a new PCB is created to store its information. The PCB is linked to an entry in the Process Table, which acts as a directory of all active processes. Together, the PCB and the Process Table enable the operating system to perform tasks such as process scheduling, context switching, and resource allocation efficiently.
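A minimal sketch of these ideas, assuming a PCB can be modeled as a plain record and the Process Table as a dictionary keyed by PID. The field names mirror the elements listed earlier; `create_process`, `context_switch`, and the `cpu` dictionary are hypothetical stand-ins, not the layout of any real kernel.

```python
from dataclasses import dataclass, field


@dataclass
class PCB:
    pid: int
    state: str = "new"
    program_counter: int = 0
    registers: dict = field(default_factory=dict)
    priority: int = 0
    memory_info: dict = field(default_factory=dict)


# The process table acts as a directory of all active processes.
process_table = {}


def create_process(pid, priority=0):
    """Create a PCB for a new process and register it in the process table."""
    pcb = PCB(pid=pid, priority=priority)
    process_table[pid] = pcb
    return pcb


def context_switch(old, new, cpu):
    """Save the CPU state into `old`, then load the state saved in `new`."""
    old.program_counter = cpu["pc"]
    old.registers = dict(cpu["regs"])
    old.state = "ready"
    cpu["pc"] = new.program_counter
    cpu["regs"] = dict(new.registers)
    new.state = "running"
```

The point of the sketch is that a context switch is mostly bookkeeping: everything needed to resume a process later is copied into its PCB before another process takes over the CPU.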

Understanding Process Scheduling

When you power on your computer, the operating system is responsible for allocating and managing resources for various processes. But how does it decide which process gets the CPU time and when? This is where process scheduling comes into play.

The Importance of Process Scheduling

Effective process scheduling is vital to ensure that the CPU is utilized optimally. Without proper scheduling, a system could become unresponsive, inefficient, or even crash.

Objectives of Process Scheduling

Process scheduling aims to achieve several critical objectives:

- Fairness: Ensuring each process gets a fair share of CPU time.

- Efficiency: Maximizing CPU utilization to complete tasks swiftly.

- Responsiveness: Reducing the waiting time for processes to execute.

Types of Process Scheduling

There are various types of process scheduling algorithms, each with its own set of rules and priorities. Let’s explore some of the most common ones:

First-Come, First-Served (FCFS) Scheduling

In FCFS scheduling, processes are executed in the order they arrive. This simple approach can lead to a problem known as the “convoy effect,” where a short process gets stuck behind a long one.
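The convoy effect is easy to see with numbers. The sketch below assumes all processes arrive at time 0 and run to completion in list order; the burst times are made up for illustration.

```python
def fcfs_waiting_times(bursts):
    """Waiting time of each process when they run in list order, all arriving at time 0."""
    waits, clock = [], 0
    for burst in bursts:
        waits.append(clock)   # a process waits until everything ahead of it has finished
        clock += burst
    return waits


def average(values):
    return sum(values) / len(values)


long_first = fcfs_waiting_times([24, 3, 3])    # long job at the head of the queue
short_first = fcfs_waiting_times([3, 3, 24])   # same jobs, long job last
```

With the long job first, the two short jobs wait 24 and 27 time units (average 17). With the long job last, the average wait drops to 3, even though the total work is identical.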

Shortest Job Next (SJN) Scheduling

The SJN scheduling algorithm runs the job with the shortest execution time first. This minimizes the average waiting time, but it requires knowing (or estimating) the execution time of each process in advance.
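A small sketch of non-preemptive SJN, assuming every job arrives at time 0 and its burst time is known up front. The job names and burst times are illustrative.

```python
def sjn_schedule(bursts):
    """Non-preemptive SJN for jobs that all arrive at time 0.

    bursts maps a job name to its burst time.
    Returns the execution order and each job's waiting time.
    """
    order = sorted(bursts, key=bursts.get)   # shortest burst first
    waits, clock = {}, 0
    for name in order:
        waits[name] = clock
        clock += bursts[name]
    return order, waits


order, waits = sjn_schedule({"A": 6, "B": 8, "C": 7, "D": 3})
average_wait = sum(waits.values()) / len(waits)
```

Here the jobs run in the order D, A, C, B and the average waiting time is 7 time units.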

Round Robin (RR) Scheduling

RR scheduling allocates a fixed time slice (quantum) to each process in a circular order. It’s a balance between fairness and responsiveness.
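The circular order can be sketched with a queue: a process that uses up its quantum without finishing goes to the back of the line. The sketch assumes all processes arrive at time 0 and ignores context-switch overhead; the burst times and the quantum of 4 are illustrative.

```python
from collections import deque


def round_robin(bursts, quantum):
    """Return the completion time of each process under round-robin scheduling."""
    remaining = dict(bursts)
    queue = deque(bursts)            # process names in arrival order
    clock, finished = 0, {}
    while queue:
        name = queue.popleft()
        run = min(quantum, remaining[name])
        clock += run
        remaining[name] -= run
        if remaining[name] > 0:
            queue.append(name)       # quantum expired: back of the queue
        else:
            finished[name] = clock   # process is done
    return finished


finish = round_robin({"P1": 24, "P2": 3, "P3": 3}, quantum=4)
```

The two short processes finish at times 7 and 10 instead of waiting for the 24-unit process to complete, which is the responsiveness benefit described above.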

Priority-Based Scheduling

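In this approach, each process is assigned a priority, and the CPU is allocated to the ready process with the highest priority. It is widely used in real-time systems and mission-critical applications, where urgent work must not wait behind routine work. One known risk is starvation: a low-priority process may wait indefinitely if higher-priority processes keep arriving.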
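One way to sketch priority selection is with a heap. The example assumes that a lower number means a higher priority (conventions differ between systems) and breaks ties by arrival order; the process names are hypothetical.

```python
import heapq


def priority_order(processes):
    """Return process names in the order a non-preemptive priority scheduler would run them.

    processes is a list of (name, priority) pairs in arrival order.
    Assumption: a lower priority number means more urgent.
    """
    # The sequence number keeps arrival order among equal priorities.
    heap = [(prio, seq, name) for seq, (name, prio) in enumerate(processes)]
    heapq.heapify(heap)
    order = []
    while heap:
        _, _, name = heapq.heappop(heap)
        order.append(name)
    return order


order = priority_order([("logger", 3), ("sensor", 1), ("ui", 2), ("backup", 3)])
```

The result is `sensor`, `ui`, `logger`, `backup`: the most urgent process runs first, and the two priority-3 processes keep their arrival order.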

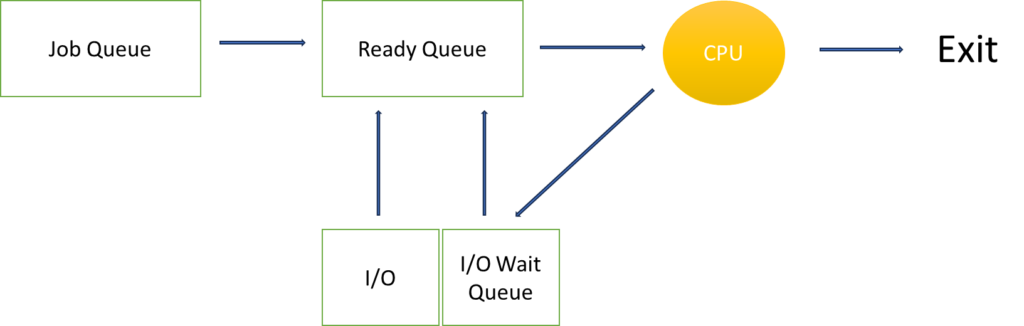

Process Scheduling Queues

To manage the execution of processes, an operating system maintains various queues. These queues help in organizing processes based on their status and priority.

1. Ready Queue

The ready queue contains all the processes that are ready to execute but are waiting for CPU time.

2. Job Queue

The job queue consists of all the processes residing in the system, whether they are in memory or on disk.

3. Device Queue

Processes that are waiting for an I/O operation to complete are placed in the device queue of the device they are waiting on.

Threads and Multithreading

1. Introduction

Multithreading is a fundamental concept in operating systems (OS). It plays a crucial role in the efficiency and performance of modern computing systems. In this section, we’ll explore threads and multithreading in operating systems, along with their significance and applications.

2. What Are Threads?

Threads are the smallest unit of a program that can be executed independently. They are like lightweight processes within a program and share the same memory space. Each thread in a program has its own set of registers and stack but shares data and code with other threads.

3. The Need for Multithreading

The need for multithreading arises from the increasing demand for multitasking and concurrency in computing. With the advent of multi-core processors, the ability to execute multiple threads simultaneously has become paramount. This leads to improved responsiveness and better resource utilization.

4. Advantages of Multithreading

Multithreading offers several advantages, including:

- Improved Performance: By dividing tasks into threads, the CPU can execute them in parallel, resulting in faster execution.

- Resource Sharing: Threads share memory and resources, making data exchange between them more efficient.

- Responsiveness: Multithreading allows a program to remain responsive, even when some threads are performing time-consuming tasks.

- Simplified Programming: Writing multithreaded applications can simplify complex problems by breaking them into smaller, manageable threads.

5. Multithreading vs. Multiprocessing

It’s important to distinguish between multithreading and multiprocessing. Multithreading involves multiple threads within a single process, while multiprocessing involves multiple processes, each with its own memory and resources. Multithreading is more lightweight and is ideal for tasks that can be parallelized within a program.

6. Thread States

Threads can be in various states, such as running, ready, or blocked. Understanding these states is crucial for efficient thread management and synchronization.

7. Synchronization

Thread synchronization is vital to avoid conflicts and race conditions when multiple threads access shared resources. Techniques like mutexes and semaphores are used to ensure data integrity.
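As a minimal sketch of a mutex in practice, the example below uses Python's `threading.Lock` to protect a shared counter. The function name `run_counter` and the thread and iteration counts are illustrative.

```python
import threading


def run_counter(n_threads=4, increments=10_000):
    """Increment a shared counter from several threads, guarded by a mutex."""
    counter = 0
    lock = threading.Lock()

    def worker():
        nonlocal counter
        for _ in range(increments):
            with lock:          # only one thread at a time may update the counter
                counter += 1

    threads = [threading.Thread(target=worker) for _ in range(n_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter
```

With the lock in place, the result is always `n_threads * increments`. Without it, the read-modify-write in `counter += 1` is not guaranteed to be atomic, so updates from different threads could be lost.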

8. Thread Priority

Threads can have different priorities, which determine their order of execution. Priority levels help in optimizing the allocation of resources.

9. Thread Safety

Writing thread-safe code is a challenge. Developers must ensure that threads don’t interfere with each other, leading to data corruption or program crashes.

10. How Multithreading Works

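Multithreading rests on three mechanisms: thread creation, scheduling, and context switching. The operating system or a runtime library creates the threads, the scheduler decides which ready thread runs next, and a context switch saves the registers and stack pointer of one thread and restores those of another.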

11. Applications of Multithreading

Multithreading finds applications in various domains, including web servers, gaming, multimedia processing, and scientific simulations. It’s a critical component of modern software development.

12. Challenges in Multithreading

Developers face challenges such as deadlocks (threads waiting on each other forever), race conditions (results that depend on unpredictable timing), and thread starvation (a thread never getting the resources it needs) when working with multithreading.

13. Multithreading in Different Operating Systems

Multithreading is implemented differently in various operating systems, such as Windows, Linux, and macOS. Each OS provides its own thread management mechanisms.

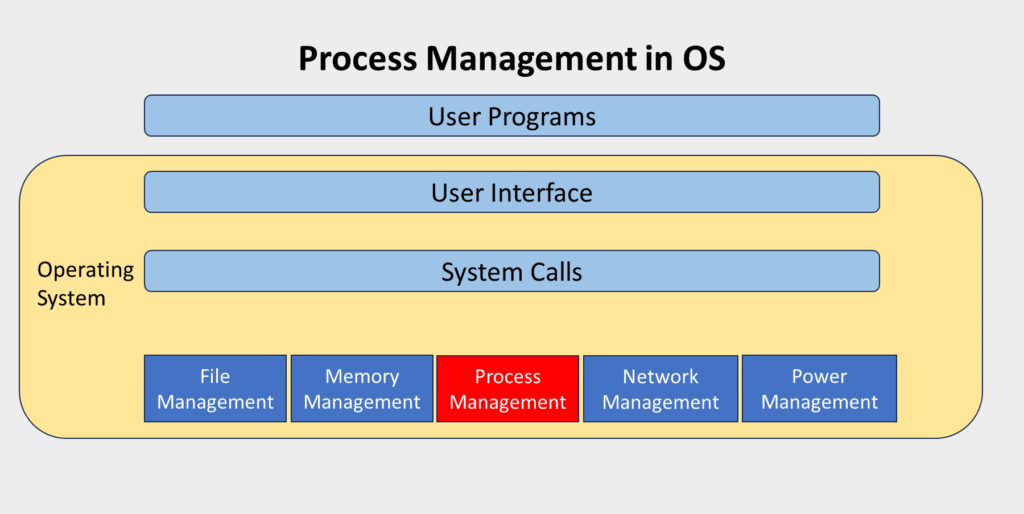

Process Synchronization

When multiple processes share resources, conflicts can arise. Process synchronization is the key to preventing data corruption and ensuring that processes work together harmoniously.

Deadlocks

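Deadlocks can bring an operating system to a standstill. A deadlock occurs when each process in a group holds a resource while waiting for a resource held by another process in the same group, so none of them can proceed. Operating systems deal with deadlocks by preventing or avoiding them, or by detecting them and recovering.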
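One common way to detect a deadlock is to look for a cycle in a wait-for graph, in which an edge from P1 to P2 means P1 is waiting for something P2 holds. The article does not describe a detection method, so this is a sketch of that well-known technique, assuming each resource has a single instance; the name `has_deadlock` is illustrative.

```python
def has_deadlock(wait_for):
    """Return True if the wait-for graph contains a cycle.

    wait_for maps each process to the processes it is currently waiting on.
    """
    WHITE, GREY, BLACK = 0, 1, 2     # unvisited, on the current path, fully explored
    color = {}

    def visit(node):
        color[node] = GREY
        for nxt in wait_for.get(node, ()):
            state = color.get(nxt, WHITE)
            if state == GREY:        # reached a process already on the path: cycle
                return True
            if state == WHITE and visit(nxt):
                return True
        color[node] = BLACK
        return False

    return any(color.get(n, WHITE) == WHITE and visit(n) for n in wait_for)
```

For example, `has_deadlock({"P1": ["P2"], "P2": ["P1"]})` is true because the two processes wait on each other, while a simple chain of waits with no loop is not a deadlock.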

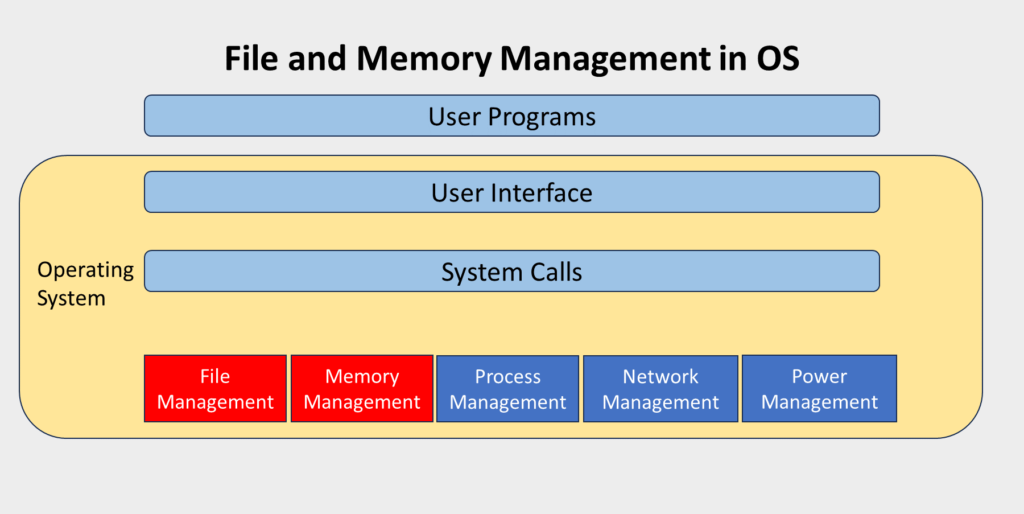

Memory Management Process

Memory management is a core function of operating systems, responsible for ensuring that computer memory is utilized efficiently and effectively. This section provides an overview of the memory management process in operating systems, covering memory allocation, address translation, memory protection, and more.

Memory Allocation

1. Contiguous Memory Allocation:

In this method, each process is allocated a single, contiguous block of memory. It’s a straightforward approach but can lead to fragmentation problems – both external (unused memory between allocated blocks) and internal (unused memory within a block).

2. Paging:

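Paging divides physical memory into fixed-size blocks called frames and divides each process’s logical memory into blocks of the same size called pages. Any page can be placed in any free frame, so a process no longer needs one contiguous region of memory. This eliminates external fragmentation, although the last page of a process may be only partly used (internal fragmentation).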
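Because paging works in fixed-size blocks, a process rarely fills its last block exactly. The sketch below computes how many blocks a process needs and how many bytes of the last one go unused. The 4096-byte block size in the example is an assumption, although it is a common value.

```python
def pages_needed(process_bytes, page_size):
    """Return (number of fixed-size blocks needed, unused bytes in the last block)."""
    pages = -(-process_bytes // page_size)        # ceiling division on integers
    wasted = pages * page_size - process_bytes    # unused space inside the last block
    return pages, wasted


pages, wasted = pages_needed(10_000, 4096)
```

A 10,000-byte process needs 3 blocks of 4096 bytes, leaving 2288 bytes unused inside the last one. That leftover space is internal to an allocated block, so no other process can use it.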

3. Segmentation:

Segmentation divides memory into logical segments that can vary in size. Each segment corresponds to a different part of a program or data. This approach provides more flexibility in memory allocation, but because segments vary in size it can still lead to external fragmentation.

Virtual Memory

1. What is Virtual Memory?

Virtual memory is a memory management technique that uses both primary (RAM) and secondary memory (like hard drives) to provide the illusion of a larger, contiguous memory space. It allows processes to use more memory than is physically available by swapping data between RAM and secondary storage.

2. Page Tables:

To implement virtual memory, each process has a page table that maps its virtual memory addresses to physical memory addresses. This mapping enables the operating system to control where data is stored in RAM and secondary storage.

3. Page Replacement Algorithms:

When there’s not enough physical memory to hold all the pages a process requires, page replacement algorithms come into play. These algorithms determine which pages should be swapped between RAM and secondary storage. Common algorithms include FIFO (First-In-First-Out), LRU (Least Recently Used), and LFU (Least Frequently Used).
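To compare two of the algorithms named above, the sketch below counts page faults for FIFO and LRU on the same sequence of page references. The reference string and the choice of 3 frames are illustrative, and the function names are hypothetical.

```python
from collections import OrderedDict, deque


def fifo_faults(refs, frames):
    """Count page faults when the page that was loaded earliest is evicted first."""
    resident, order, faults = set(), deque(), 0
    for page in refs:
        if page in resident:
            continue
        faults += 1
        if len(resident) == frames:
            resident.discard(order.popleft())   # evict the oldest loaded page
        resident.add(page)
        order.append(page)
    return faults


def lru_faults(refs, frames):
    """Count page faults when the least recently used page is evicted first."""
    resident, faults = OrderedDict(), 0
    for page in refs:
        if page in resident:
            resident.move_to_end(page)          # mark as most recently used
            continue
        faults += 1
        if len(resident) == frames:
            resident.popitem(last=False)        # evict the least recently used page
        resident[page] = True
    return faults


refs = [7, 0, 1, 2, 0, 3, 0, 4, 2, 3, 0, 3, 2, 1, 2, 0, 1, 7, 0, 1]
```

On this reference string with 3 frames, FIFO incurs 15 page faults and LRU incurs 12. LRU does better here because it keeps pages that were used recently, but the gap depends entirely on the access pattern.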

Address Translation

Address translation is the process of converting the virtual memory addresses used by programs into the physical memory addresses where data is actually stored. The translation uses the page table associated with each process: the operating system maintains the table, and the memory management unit (MMU) typically performs the lookup in hardware on each memory access. All of this is transparent to the process.
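The lookup itself can be sketched in a few lines: split the virtual address into a page number and an offset, look the page up, and combine the resulting frame number with the same offset. The page size of 4096 bytes and the contents of `page_table` are assumptions for illustration; real hardware uses multi-level tables and caches.

```python
PAGE_SIZE = 4096  # assumed page size in bytes


def translate(virtual_addr, page_table):
    """Translate a virtual address using a page table that maps page number to frame number."""
    page, offset = divmod(virtual_addr, PAGE_SIZE)
    if page not in page_table:
        # In a real system this traps to the OS, which loads the page from secondary storage.
        raise LookupError(f"page fault: page {page} is not resident")
    return page_table[page] * PAGE_SIZE + offset


page_table = {0: 5, 1: 2, 2: 9}      # hypothetical mapping for one process
physical = translate(8200, page_table)
```

Virtual address 8200 falls in page 2 at offset 8. Page 2 is mapped to frame 9, so the physical address is 9 × 4096 + 8 = 36872.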

Memory Protection

Memory protection is a crucial aspect of memory management that ensures the integrity and security of the operating system and the processes it runs. It prevents one process from accessing or modifying the memory space of another process. This is achieved through hardware features and memory management units.

Fragmentation

Fragmentation is a common challenge in memory management. It comes in two forms:

1. External Fragmentation:

This occurs when there’s unused memory between allocated blocks of memory. Compaction is one approach to address external fragmentation, which involves moving processes in memory to create larger contiguous blocks.

2. Internal Fragmentation:

Internal fragmentation happens when there’s unused memory within a block allocated to a process. Proper memory allocation methods can help minimize internal fragmentation.
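External fragmentation can be made concrete with a first-fit search over a list of free blocks. First-fit is only one possible placement strategy, chosen here for illustration, and the hole sizes are made up.

```python
def first_fit(holes, request):
    """Return the index of the first free block large enough for `request`, or None."""
    for i, size in enumerate(holes):
        if size >= request:
            return i
    return None


holes = [100, 50, 120]          # free blocks in KB, separated by allocated memory
total_free = sum(holes)         # 270 KB free in total
compacted = [total_free]        # after compaction, the free space is one block
```

A 200 KB request fails against `holes` even though 270 KB is free, because no single hole is large enough. Against `compacted` the same request succeeds, which is the effect compaction is meant to achieve.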

Input/Output (I/O) Operations

What Are I/O Operations?

I/O operations involve the exchange of data and instructions between a computer’s central processing unit (CPU) and various hardware devices. These devices can include keyboards, displays, disks, network cards, and more.

The Role of I/O Devices

I/O devices act as intermediaries between the computer and the outside world. They enable users and applications to interact with the computer system, whether through input devices like keyboards or output devices like displays and printers.

I/O Operations and System Calls

System Calls for I/O

I/O operations are typically initiated through system calls. System calls are interfaces provided by the operating system that allow user programs to request I/O services. Common system calls for I/O include read(), write(), and open().
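Python's `os` module exposes thin wrappers around these calls, which makes the sequence easy to see. The sketch below writes a few bytes to a temporary file and reads them back. The file name `demo.txt` and the helper `roundtrip` are illustrative.

```python
import os
import tempfile


def roundtrip(data: bytes) -> bytes:
    """Write data with open()/write(), then read it back with open()/read()."""
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "demo.txt")

        fd = os.open(path, os.O_WRONLY | os.O_CREAT, 0o600)  # returns a file descriptor
        try:
            os.write(fd, data)
        finally:
            os.close(fd)

        fd = os.open(path, os.O_RDONLY)
        try:
            return os.read(fd, len(data))                     # read up to len(data) bytes
        finally:
            os.close(fd)
```

Each `os.open`, `os.write`, `os.read`, and `os.close` call asks the operating system to act on the program's behalf. The program never touches the storage device directly.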

Device Drivers

Device drivers are essential software components that enable the operating system to communicate with various hardware devices. They serve as intermediaries, translating generic operating system commands into device-specific instructions.

Blocking and Non-blocking I/O

Blocking I/O

In blocking I/O operations, when a process requests data from a device, it is blocked or suspended until the data is available. This can lead to inefficiencies, especially in multi-programming environments.

Non-blocking I/O

Non-blocking I/O allows a process to request data from a device and continue executing other tasks without waiting. While this can improve efficiency, it often requires additional programming complexity to handle asynchronous I/O.
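The difference shows up in a small sketch with a pair of connected sockets. With the socket in non-blocking mode, a read on an empty socket returns immediately with an error instead of suspending the caller. The helper names `try_read` and `demo` are illustrative.

```python
import select
import socket


def try_read(sock, size=1024):
    """Return the available bytes, or None if reading would have blocked."""
    try:
        return sock.recv(size)
    except BlockingIOError:
        return None


def demo():
    a, b = socket.socketpair()
    try:
        b.setblocking(False)               # reads on b no longer suspend the caller
        before = try_read(b)               # nothing has been sent yet
        a.sendall(b"ping")
        select.select([b], [], [], 1.0)    # wait up to 1 second until b is readable
        after = try_read(b)
        return before, after
    finally:
        a.close()
        b.close()
```

The first read returns `None` straight away, leaving the program free to do other work. The extra handling around `BlockingIOError` and `select` is an example of the additional programming complexity mentioned above.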

Direct Memory Access (DMA)

Direct Memory Access (DMA) is a feature that allows certain hardware devices to read from or write to memory without CPU intervention. DMA enhances I/O efficiency by reducing the burden on the CPU and speeding up data transfer.

Buffering

Buffering is a technique that involves temporarily storing data in memory before or after an I/O operation. It is used to mitigate speed mismatches between I/O devices and the CPU and enhance overall system performance.

I/O Scheduling

I/O scheduling algorithms determine the order in which pending I/O requests are serviced. These algorithms aim to optimize disk performance by reducing seek times and ensuring fair access to I/O devices.
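To show how the service order affects seek distance, the sketch below compares serving disk requests in arrival order with Shortest Seek Time First (SSTF). The article does not name a specific algorithm, so SSTF is used here as one well-known example. The cylinder numbers and the starting head position are illustrative.

```python
def fcfs_seek(start, requests):
    """Total head movement when requests are served in arrival order."""
    total, head = 0, start
    for cylinder in requests:
        total += abs(cylinder - head)
        head = cylinder
    return total


def sstf_seek(start, requests):
    """Total head movement when the nearest pending request is always served next."""
    pending, head, total = list(requests), start, 0
    while pending:
        nearest = min(pending, key=lambda c: abs(c - head))
        total += abs(nearest - head)
        head = nearest
        pending.remove(nearest)
    return total


requests = [98, 183, 37, 122, 14, 124, 65, 67]
```

Starting from cylinder 53, arrival order moves the head across 640 cylinders, while SSTF needs only 236. The trade-off is fairness: under SSTF, requests far from the head can be postponed repeatedly.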

Security and Processes

Security is a paramount concern in modern operating systems. This section will touch upon the security aspects related to processes, including access control and privilege management.

What is Memory Management?

Memory management is the process of handling computer memory, including the allocation and deallocation of memory resources. It plays a vital role in ensuring that a computer’s memory is used effectively to support the execution of programs. Proper memory management is crucial for the stable and efficient operation of an operating system.

Why is Memory Management Important?

Efficient memory management is essential for several reasons:

- It ensures that each program gets the necessary memory space to run without interference from other programs.

- It helps prevent memory leaks and unauthorized access to memory locations.

- It optimizes the use of physical memory and reduces memory wastage.

Types of Memory in a Computer

Computers have multiple types of memory, with each serving a specific purpose. The primary ones include:

Primary Memory

RAM (Random Access Memory)

- RAM is volatile memory used for storing data that the CPU needs while executing programs.

- It provides fast access to data but loses its content when the computer is turned off.

ROM (Read-Only Memory)

- ROM is non-volatile memory that stores critical firmware and instructions.

- It retains data even when the power is off.

Secondary Memory

Secondary memory is non-volatile and used for long-term data storage. Common types include:

Hard Disk Drives (HDD)

- HDDs store data on spinning disks, providing ample storage capacity at a lower cost.

Solid-State Drives (SSD)

- SSDs use flash memory and have no moving parts, which generally makes them faster and more resistant to physical shock than HDDs.

Optical Drives

- Optical drives use CDs, DVDs, and Blu-ray discs for data storage.

What is Virtual Memory?

Virtual memory is a memory management technique that extends the computer’s RAM by using a portion of the hard drive as additional memory. This allows larger programs to run even when physical RAM is limited. The operating system plays a critical role in managing the transfer of data between RAM and the hard drive, swapping data in and out of the hard drive as needed. This technique ensures that applications can continue to function smoothly without crashing due to insufficient memory, despite the physical limitations of the system’s RAM.

Role of Memory Management in Operating Systems

Memory management is a crucial function in an operating system. It oversees various processes, including memory allocation, memory protection, and memory sharing. The operating system is responsible for ensuring that programs do not interfere with each other’s memory space.

File Systems: Organizing and Accessing Data

What Is a File System?

A file system is a framework that dictates how data is stored and retrieved on your computer’s storage devices, such as hard drives, SSDs, and USB drives. It acts as an intermediary, facilitating communication between the operating system and the physical storage hardware.

File systems are the third pillar of operating systems. They provide a structured and organized way to store, retrieve, and manage data. File systems are responsible for:

- File Storage: They determine how data is stored on storage devices like hard drives and SSDs.

- File Naming: File systems dictate the rules for naming files and folders, ensuring consistency and order.

- Data Access: They provide a way to access and modify data efficiently.

File systems are essential for maintaining the integrity of data and ensuring that it’s easily accessible to both the user and the operating system.

Key Components of a File System

To fully grasp the functioning of a file system, let’s break it down into its primary components.

1. Files

Files are the fundamental units of data storage in a file system. They can contain a wide range of data, such as documents, images, videos, or any other information a computer processes. Each file is identified by a name that is unique within its directory, often with an extension that helps the operating system determine its format and the appropriate application to open it.

2. Directories

Directories are containers that hold files and other directories, giving data a structured, hierarchical organization. Grouping related files together simplifies categorization and makes it quick to navigate to a desired file or subdirectory.

3. Metadata

Metadata is information about a file, such as its size, creation date, last-modified time, and permissions. The operating system uses it to track, index, and control access to files. Because metadata describes a file without being part of its contents, attributes can be inspected and searched without reading the file itself.
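Most operating systems let a program read a file's metadata directly. The sketch below uses Python's `os.stat` to collect a few attributes. The helper name `describe` and the keys of the returned dictionary are illustrative.

```python
import os
import stat


def describe(path):
    """Return selected metadata for a path without reading the file's contents."""
    info = os.stat(path)
    return {
        "size": info.st_size,                          # size in bytes
        "modified": info.st_mtime,                     # last modification time (seconds since epoch)
        "permissions": stat.filemode(info.st_mode),    # e.g. '-rw-r--r--'
        "is_dir": stat.S_ISDIR(info.st_mode),
    }
```

Calling `describe` on a file returns its size, modification time, and permission string without opening the file.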

4. File Paths

File paths function like addresses for files on your system, describing the route through the directory structure to a specific file. An absolute path starts from the root of the file system, while a relative path is interpreted from the current working directory. Understanding the difference is essential for effective file management and troubleshooting.
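The difference between absolute and relative paths can be sketched with Python's `pathlib`. The example uses `PurePosixPath`, which manipulates POSIX-style paths without touching the disk. The directory and file names are hypothetical.

```python
from pathlib import PurePosixPath


def resolve(cwd, path):
    """Return the absolute form of `path`, interpreting relative paths from `cwd`."""
    p = PurePosixPath(path)
    if p.is_absolute():
        return p                      # already a complete route from the root
    return PurePosixPath(cwd) / p     # relative: start from the working directory


report = resolve("/home/ana", "docs/report.txt")
hosts = resolve("/home/ana", "/etc/hosts")
```

Here `report` becomes `/home/ana/docs/report.txt`, while `hosts` stays `/etc/hosts` because it was already absolute. The parts of a path are also available individually, for example `report.name` and `report.suffix`.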

Popular File Systems

There are various file systems in use today, each with its own set of features and compatibility. Here are some of the most popular ones:

1. NTFS (New Technology File System)

Developed by Microsoft, NTFS is the standard file system for Windows operating systems. It provides advanced features such as encryption, compression, and access control. NTFS also supports large file sizes, disk quotas, and journaling, which help maintain data integrity and protect against corruption.

2. Ext4

Ext4, the fourth extended file system, is primarily used in Linux distributions. It is known for its reliability, scalability, and improved performance compared to its predecessors. Ext4 supports larger file sizes, faster file allocation, and journaling, which helps prevent data corruption.

3. APFS (Apple File System)

APFS (Apple File System) is designed for Apple’s macOS, iOS, watchOS, and tvOS. It is optimized for flash and SSD storage and introduces features such as strong encryption, space sharing, and fast directory sizing.

Conclusion

In the world of operating systems, processes, memory management, and file systems serve as the unsung heroes. They work behind the scenes to provide a reliable and efficient platform for all your computing needs, and together they help optimize system performance, prevent crashes, and keep the system stable. Understanding these fundamental building blocks lets us appreciate both the complexity and the elegance of modern operating systems. The next time you interact with your computer, take a moment to recognize the components working in harmony to make everything run smoothly.

FAQs

1. What is the main role of an operating system?

An operating system acts as an intermediary between users and computer hardware. It manages resources, coordinates system components, allocates processing power, and handles memory distribution, so that software applications can run efficiently in a stable and user-friendly environment.

2. Why is memory management important in an operating system?

Memory management ensures the efficient use of computer memory. It allocates and deallocates memory as needed and keeps programs from interfering with each other’s memory. Effective memory management improves system performance, stability, and multitasking, which results in a smoother and more responsive user experience.

3. What are some popular operating systems?

Some of the most popular operating systems include Windows, Linux, and macOS. Notably, each of these systems offers unique features and advantages. For instance, many individuals and businesses choose Windows for personal and professional computing because of its user-friendly interface and extensive software compatibility. In contrast, developers and IT professionals prefer Linux for its open-source flexibility, strong security, and extensive customization options. Meanwhile, creative professionals rely on macOS for its seamless integration with Apple’s ecosystem, which ensures a smooth and optimized user experience.

4. How do processes, memory, and file systems interact in an operating system?

A process needs memory to hold its code and data, and it uses the file system to load programs and to read or write data that must outlive it. The operating system mediates both: memory management gives each process its own protected memory space, and system calls give it controlled access to files. This coordination keeps the system stable and responsive while many processes run at once.

5. What does the future hold for operating systems?

As technology continues to evolve, operating systems will adapt to new challenges and opportunities, extending their capabilities to meet growing demands. This continuous adaptation is what allows them to remain efficient, secure, and user-friendly as the digital landscape changes.